Cloudflare's Agent Cloud signals that agentic AI infrastructure is now production-ready for business.

Most businesses that deployed AI in 2024 deployed a chatbot. Those chatbots answered questions, deflected tickets, and occasionally frustrated customers. That generation of AI is already being replaced. The shift to AI agents for business — autonomous systems that reason, plan, and execute multi-step workflows without human supervision — is happening faster than most organizations anticipated, and the infrastructure to support it at enterprise scale is now arriving.

Cloudflare's Agents Week 2026, which ran April 13–17, was the clearest signal yet. In five days, the company released production-grade infrastructure across every layer of the agentic AI stack: compute, networking, security, memory, and model access. This was not a product roadmap preview. These tools were shipped. For business leaders evaluating where AI fits in their operations, the question has shifted from whether to adopt agents to how fast to move, and Cloudflare's announcement makes the infrastructure excuse considerably harder to maintain.

Understanding the business implications requires understanding what Cloudflare released. The Agents Week 2026 announcements were structured around a single thesis: agentic AI is Cloud 2.0, and existing infrastructure was not built for it.

A core challenge for deployed AI agents has been execution cost. Traditional cloud containers are expensive, slow to spin up, and poorly suited to the bursty, on-demand workloads that agents generate. Cloudflare's Dynamic Workers address this directly — an isolate-based compute model that runs AI-generated code in secure, sandboxed environments with startup times measured in milliseconds rather than seconds.

According to independent analysis, Dynamic Workers run AI-generated code approximately 100 times faster than traditional containers. For businesses, this changes the economics of agent deployment materially. Tasks that previously required always-on infrastructure can now execute on-demand, at a fraction of the cost, and scale to millions of concurrent agents without provisioning overhead.

As AI agents gain the ability to access internal systems, databases, and APIs, the security surface expands significantly. Cloudflare's Mesh — announced April 14, 2026 — is a private networking layer that unifies AI agents, human users, and multi-cloud infrastructure into a single secure fabric. It integrates with Cloudflare One's Zero Trust platform, enabling agents to access private infrastructure without exposing it to the public internet.

This matters for enterprises in regulated industries. Healthcare organizations handling patient data, financial services firms processing transaction records, and logistics companies managing supply chain systems all operate under compliance mandates that make unsecured agent access commercially unacceptable. Mesh provides a credible answer to that constraint.

One of the structural risks in early enterprise AI adoption was vendor lock-in — committing to a single model provider and losing flexibility as better models emerged. Cloudflare's updated AI Gateway now provides access to over 70 models across 12+ providers (including OpenAI, Anthropic, Google, Groq, and others) through a single API endpoint and unified billing. Switching between models requires a one-line code change.
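The "one-line change" claim is easier to picture with a small sketch. The Python below is not Cloudflare's API: the gateway URL, the `provider/model` naming scheme, and the `build_chat_request` helper are all hypothetical placeholders. It only illustrates the general pattern of keeping the endpoint and message format fixed while the model identifier lives in a single constant.

```python
from dataclasses import dataclass

# Hypothetical placeholder endpoint, not a real Cloudflare URL.
GATEWAY_URL = "https://gateway.example.com/v1/chat"

# The only line that changes when switching models (identifiers are illustrative).
MODEL = "provider-a/large-model"


@dataclass(frozen=True)
class ChatRequest:
    url: str
    body: dict


def build_chat_request(prompt: str, model: str = MODEL) -> ChatRequest:
    """Build a provider-agnostic request for a single gateway endpoint."""
    provider, _, name = model.partition("/")
    if not provider or not name:
        raise ValueError(f"expected 'provider/model', got {model!r}")
    return ChatRequest(
        url=GATEWAY_URL,
        body={
            "model": model,
            "messages": [{"role": "user", "content": prompt}],
        },
    )
```

Under these assumptions, application code that calls `build_chat_request` never needs to know which provider sits behind the gateway, which is the flexibility the paragraph above describes.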

Cloudflare also announced native support for the Model Context Protocol (MCP), the emerging standard for how AI agents discover and interact with external tools and services. MCP support means agents built on Cloudflare's infrastructure can plug into a growing ecosystem of business applications without custom integration work for each connection.
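For readers unfamiliar with MCP, the public specification describes it as a JSON-RPC 2.0 protocol in which a client discovers tools with a `tools/list` request and invokes one with `tools/call`. The sketch below only constructs those two messages as plain dictionaries. The helper names and the `lookup_order` tool are hypothetical, and transport, initialization, and error handling are deliberately left out.

```python
import json
from itertools import count

# Simple incrementing request ids, as JSON-RPC requires an id per request.
_ids = count(1)


def jsonrpc_request(method, params=None):
    """Build a JSON-RPC 2.0 request message as a plain dict."""
    msg = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        msg["params"] = params
    return msg


def list_tools_request():
    """Ask an MCP server which tools it exposes."""
    return jsonrpc_request("tools/list")


def call_tool_request(name, arguments):
    """Invoke a named tool with a dict of arguments."""
    return jsonrpc_request("tools/call", {"name": name, "arguments": arguments})


if __name__ == "__main__":
    # "lookup_order" is an illustrative tool name, not part of any real server.
    print(json.dumps(list_tools_request()))
    print(json.dumps(call_tool_request("lookup_order", {"order_id": "A-1001"})))
```

Because every tool is reached through the same two message shapes, adding a new business application means exposing it as an MCP server rather than writing a bespoke connector for each agent.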

Cloudflare's timing is deliberate. It reflects broader market forces converging in 2026 that make agentic AI deployment viable at a scale that was not achievable two years ago. Gartner's August 2025 forecast sets out the trajectory: 40% of enterprise applications will integrate task-specific AI agents by the end of 2026, up from less than 5% in 2025. The same Gartner analysis projects that agentic AI could drive 30% of enterprise application software revenue by 2035, surpassing $450 billion. McKinsey's 2025 State of AI survey reinforces the commercial stakes: the firm estimates that agentic AI could add between $2.6 trillion and $4.4 trillion in annual value across business use cases at scale.

Three forces are making this possible now:

The transition from AI pilots to AI operations is not automatic. Organizations that move from experimentation to production deployment share a set of consistent strategic practices.

AI customer service tools represent the clearest near-term application of agentic AI for most businesses. The distinction from first-generation chatbots is not incremental — it is architectural. An AI agent deployed in a customer support context does not answer "where is my order?" and stop. It retrieves the order status, identifies a shipping exception, contacts the logistics provider via API, updates the customer proactively, and logs the resolution — all without routing to a human queue.
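The architectural difference can be made concrete with a toy sketch of the order workflow described above. Everything here is an assumption for illustration: the `Order` and `SupportAgent` classes, the in-memory order store, and the stubbed `contact_carrier` call stand in for real order, logistics, and messaging systems, and no language model is involved.

```python
from dataclasses import dataclass, field


@dataclass
class Order:
    order_id: str
    status: str          # e.g. "in_transit" or "delayed"
    customer_email: str


@dataclass
class SupportAgent:
    orders: dict                                  # stand-in for an order system
    log: list = field(default_factory=list)       # stand-in for a ticket log
    outbox: list = field(default_factory=list)    # stand-in for customer messaging

    def contact_carrier(self, order: Order) -> str:
        # Hypothetical stub: a real agent would call the logistics provider's API.
        return "rescheduled"

    def handle_order_inquiry(self, order_id: str) -> str:
        order = self.orders.get(order_id)
        if order is None:
            # Unknown orders go to a human rather than being guessed at.
            self.log.append((order_id, "escalated: unknown order"))
            return "escalated"
        if order.status == "delayed":
            # Shipping exception: act on it, notify the customer, record the outcome.
            outcome = self.contact_carrier(order)
            self.outbox.append(
                (order.customer_email, f"Order {order_id}: delivery {outcome}")
            )
            self.log.append((order_id, f"resolved: {outcome}"))
            return "resolved"
        # No exception: a plain status answer is enough.
        self.outbox.append((order.customer_email, f"Order {order_id}: {order.status}"))
        self.log.append((order_id, "answered"))
        return "answered"
```

A first-generation chatbot would stop at the last branch. The agent pattern adds the middle branch, where the system takes action and closes the loop itself.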

The competitive implication is direct. As of mid-2026, 54% of enterprises report running AI agents in production environments. Businesses that have not begun integrating agentic AI into their customer operations are not competing on equal terms — they are absorbing cost structures that their faster-moving competitors have already begun to eliminate.

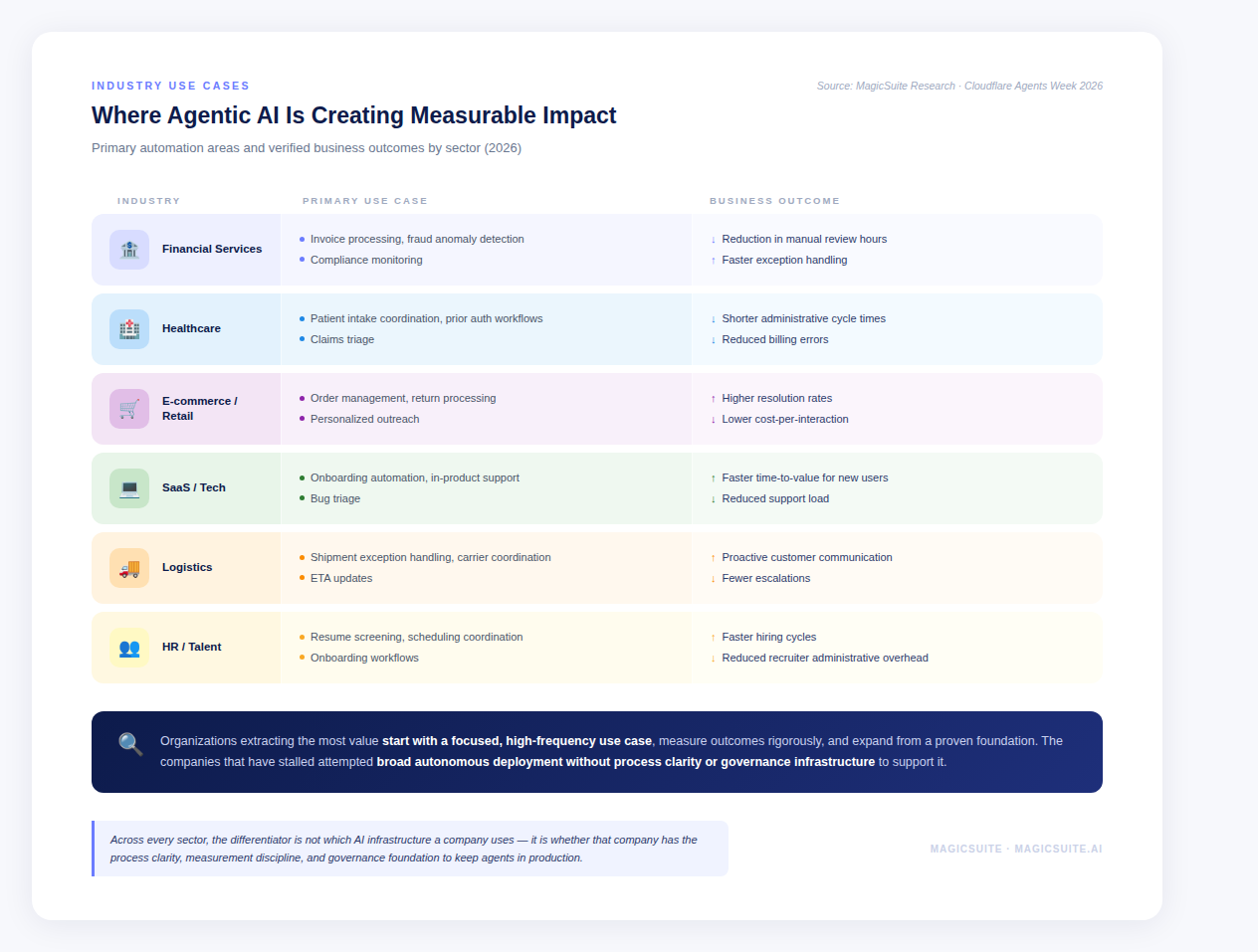

Customer-facing use cases attract most of the attention, but the operational leverage from agentic AI in internal workflows is comparable and, in some industries, larger. Finance and operations teams are deploying agents for invoice matching, expense auditing, and cash flow forecasting. HR teams are using them for resume screening and interview coordination. Compliance teams are running agents for policy monitoring and anomaly detection.

McKinsey's high-performing organizations, the 6% of companies where more than 5% of EBIT is attributable to AI, are three times more advanced in agent deployment than peers and consistently invest more than 20% of digital budgets in AI. The operational moat these organizations are building is not about having better tools. It is about having automated the repetitive, rules-based work that fills their competitors' cost structures.

As AI agents gain the ability to take action (submitting forms, executing transactions, querying sensitive databases), the risk exposure grows proportionally. Gartner's warning is direct: more than 40% of agentic AI projects are at risk of cancellation by 2027 due to inadequate governance, observability, and ROI clarity. The enterprises scaling fastest in 2026 built audit trails, access controls, and permission frameworks before scaling agent autonomy, not after. For decision-makers evaluating AI agents, data governance is not a compliance checkbox at the end of the implementation process. It is the architectural foundation the rest of the deployment sits on. Cloudflare Mesh and Zero Trust integration address part of this at the infrastructure level, but the organizational policies and system access frameworks must be defined internally.
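What "permissions and audit trails before autonomy" means in practice can be sketched in a few lines. The example below is a minimal illustration, not a governance product or any vendor's API: `GovernedAgent`, `PermissionDenied`, and the action names are hypothetical. The point is the ordering, in which every attempted action is recorded and checked against an allow-list before anything executes.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


class PermissionDenied(Exception):
    """Raised when an agent attempts an action outside its allow-list."""


@dataclass
class GovernedAgent:
    name: str
    allowed_actions: frozenset
    audit_log: list = field(default_factory=list)

    def perform(self, action, target, handler):
        allowed = action in self.allowed_actions
        # Record every attempt, including denied ones, before doing any work.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent": self.name,
            "action": action,
            "target": target,
            "allowed": allowed,
        })
        if not allowed:
            raise PermissionDenied(f"{self.name} may not {action} on {target}")
        return handler(target)
```

An agent created with `frozenset({"read_invoice"})` can read invoices but raises `PermissionDenied` on a refund attempt, and both attempts appear in `audit_log`. Widening autonomy then becomes an explicit, reviewable change to the allow-list rather than a side effect of a prompt.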

Woori Bank Capital's deployment of AI-powered customer service at scale reflects a pattern visible across these sectors: organizations that started with a focused, high-frequency use case, measured outcomes rigorously, and expanded from a proven foundation. The companies that have stalled are those that attempted broad autonomous deployment without the process clarity or governance infrastructure to support it.

Cloudflare's Agents Week 2026 resolved a key objection that enterprise technology teams had maintained through 2025: that the infrastructure was not ready for production-grade agent deployment. Dynamic Workers, Cloudflare Mesh, the unified model layer, persistent memory, MCP support, and the expanded Agents SDK collectively close that gap.

What Cloudflare cannot resolve is the organizational readiness gap. McKinsey's 2025 State of AI report found that while AI adoption is near-universal, less than 10% of organizations have successfully scaled AI agents in any individual function. The constraint is not technical access. It is the absence of clear process mapping, defined success metrics, and governance frameworks that would make scaling responsible.

This is the decisive factor in 2026. As infrastructure commoditizes, and it is commoditizing rapidly, the competitive advantage will accrue to the organizations with the sharpest process clarity: they know which workflows to automate, they can measure the outcome, and they have the governance in place to expand confidently.

Before investing in agent deployment infrastructure, business leaders should be able to answer the following:

Luke is a technical market researcher with a deep passion for analyzing emerging technologies and their market impact. With a keen eye for data and trends, Luke provides valuable insights that help shape strategic decisions and product innovations. His expertise lies in evaluating industry developments and uncovering key opportunities in the ever-evolving tech landscape.